Something interesting is happening to the internet, and most people can’t see it. Literally.

Visit any major retailer’s website in a browser and you’ll see what you’ve always seen: product images, navigation menus, banners, reviews. Now visit that same URL as an AI agent, and you are increasingly likely to get something entirely different: a structured version of the same content, optimized not for human eyes but for machine consumption. A shadow web, built just for machines, is being constructed at a rapid pace.

Consider what this looks like in practice. Today, if you ask an AI agent to find running shoes under $150, it scrapes an HTML product page designed for human eyes, guesses at pricing from visual layouts, and has no reliable way to check return policies or verify inventory. In the machine-native web, the agent reads a structured merchant profile, evaluates products against declared attributes, authenticates with a cryptographic credential, and completes the purchase, all using infrastructure purpose-built for this interaction. That infrastructure is what’s being built right now.
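To make the contrast concrete, here is a minimal Python sketch of the “evaluate products against declared attributes” step. The `Product` fields, the catalog, and the `shortlist` function are hypothetical; real merchant-profile schemas such as UCP define their own structure, and authentication and checkout are out of scope here.

```python
from dataclasses import dataclass

# Hypothetical product record: real merchant profiles (e.g. UCP) define their own fields.
@dataclass
class Product:
    sku: str
    name: str
    category: str
    price_usd: float
    in_stock: bool
    return_window_days: int

def shortlist(products, category, max_price, min_return_days=0):
    """Keep in-stock products that match the declared attributes, cheapest first."""
    matches = [
        p for p in products
        if p.category == category
        and p.price_usd <= max_price
        and p.in_stock
        and p.return_window_days >= min_return_days
    ]
    return sorted(matches, key=lambda p: p.price_usd)

# Illustrative catalog; every value is made up.
catalog = [
    Product("RS-100", "Road Runner", "running_shoes", 129.0, True, 30),
    Product("RS-200", "Trail Pro", "running_shoes", 165.0, True, 60),
    Product("RS-300", "Daily Trainer", "running_shoes", 99.0, False, 30),
    Product("RS-400", "Tempo Flyer", "running_shoes", 140.0, True, 14),
]

# "Running shoes under $150 with at least a 30-day return window"
for p in shortlist(catalog, "running_shoes", max_price=150, min_return_days=30):
    print(p.sku, p.name, p.price_usd)
```

Because price, stock, and return policy are declared fields rather than pixels on a page, the agent’s query reduces to a filter and a sort instead of a scrape and a guess.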

We’re seeing new formats, protocols, and systems emerge across the web, from structured site indices like llms.txt to payment systems and authentication. Taken individually, each of these is a product feature or a technical standard. Taken together, they describe something much larger: the emergence of a parallel web. It is a third, shadow layer of content, discovery mechanisms, payment systems, communication protocols, and identity infrastructure, being built alongside the human internet but designed from the ground up for a different kind of participant.

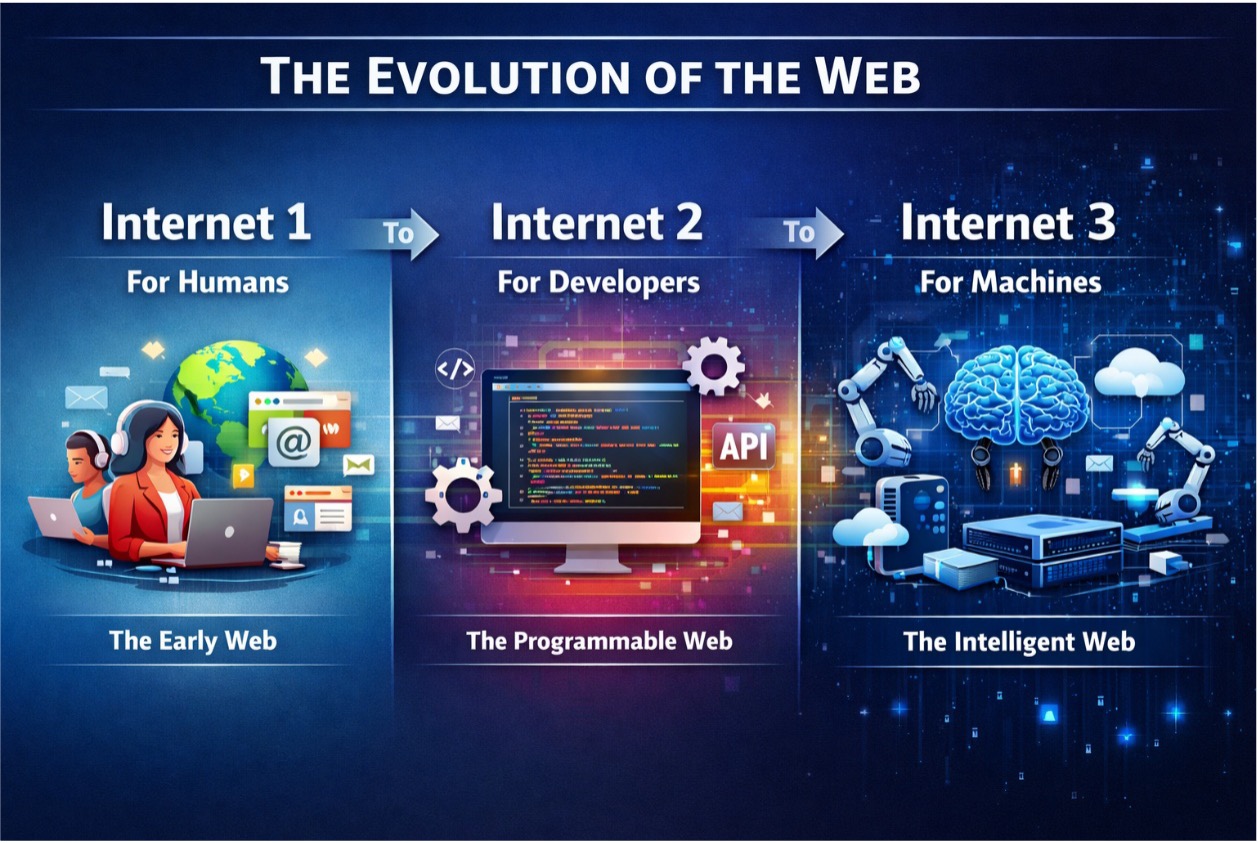

We’re calling this the machine layer of the internet. The first layer was built for humans: browsers and HTML pages designed for people to read and click. The second was built for developers: APIs, data formats, and infrastructure that let software systems integrate with each other. The third is being built for machines: AI agents, automated systems, and protocols that can discover, transact, communicate, and pay as native participants in the web, without a human in the loop. This encompasses, but is much broader than, the current “agentic” buildout driving tech headlines. What’s emerging is infrastructure for machines as a category, with agents as the most visible and commercially significant use case today.

As early-stage investors, we often see trends just as they are beginning. And as fintech investors, we have a front-row seat to the most dramatic rewiring of commerce we’ve seen in our investing careers. This is a structural shift occurring in real time. Companies that adopt this new architecture early will gain an advantage by being first to establish their products and services in the discovery and engagement channels created by a massive change in consumer behavior. Companies that don’t will get disintermediated. And the speed at which the architecture is being assembled suggests the window to shape it is narrow.

Instead of a single protocol, we’re seeing a stack of multiple layers being built simultaneously by different companies, often in competition with each other, that together represent the most significant evolution in web architecture since the rise of APIs. What makes it interesting is that at every layer, what’s being built either sits alongside or directly on top of the existing human web. These are parallel versions of things the internet already does, redesigned for machines.

The web today has been built for humans. Whether it’s a news article or a product catalog, it is designed for visual display in a browser, packed with navigation, ads, and layout code. Machines need a cleaner way to read the web and find what’s available on it. What has emerged is a wave of shadow artifacts: parallel versions of web content and commerce infrastructure, created for machine consumption, that sit alongside the human-facing web.

Across content types, the pattern is the same: a parallel, machine-readable version is being created alongside the human one. The breadth of use cases these artifacts cover, and their simultaneous arrival, confirm that the human-readable web is suboptimal when the visitor is a machine.
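llms.txt is one of the simplest of these shadow artifacts. As proposed, it is a Markdown file served at a site’s `/llms.txt` path: an H1 title, an optional blockquote summary, and H2 sections listing links with optional notes (a section named “Optional” marks links an agent may skip). The Python sketch below parses only that subset of the format; the sample file, the `example.com` URLs, and the `parse_llms_txt` helper are illustrative assumptions, not a reference implementation.

```python
import re

# Matches list entries of the form "- [title](url)" or "- [title](url): notes".
LINK = re.compile(r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<notes>.*))?$")

def parse_llms_txt(text):
    """Parse the title, summary, and linked sections of a minimal llms.txt file."""
    doc = {"title": None, "summary": None, "sections": {}}
    section = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and doc["title"] is None:
            doc["title"] = line[2:].strip()
        elif line.startswith("> ") and doc["summary"] is None:
            doc["summary"] = line[2:].strip()
        elif line.startswith("## "):
            section = line[3:].strip()
            doc["sections"][section] = []
        elif section is not None:
            match = LINK.match(line)
            if match:
                doc["sections"][section].append(
                    {"title": match["title"], "url": match["url"], "notes": match["notes"]}
                )
    return doc

# Hypothetical file a retailer might serve at https://example.com/llms.txt
SAMPLE = """\
# Example Store

> Running gear and apparel, shipped within the US.

## Products

- [Running shoes](https://example.com/shoes.md): Current catalog with prices
- [Returns policy](https://example.com/returns.md)

## Optional

- [Company history](https://example.com/about.md)
"""

doc = parse_llms_txt(SAMPLE)
print(doc["title"], list(doc["sections"]))
```

The point of the format is visible in how little code it takes: no layout, ads, or navigation to strip, just a title, a summary, and pointers to clean Markdown versions of the pages that matter.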

As agents take on more complex tasks, they increasingly need to work with other systems, such as tools and other agents, in combinations that can’t be fully anticipated in advance. This is a fundamentally different problem from the one APIs were designed to solve. APIs are static: a developer decides at build time which systems need to connect, writes the integration, and ships it. But agents operate in contexts where the tools and counterparts they need only become clear as a task unfolds. That requires a new kind of infrastructure that is native to the machine web.

Agents don’t browse a website to understand what a company does. They read an Agent Card, a structured capability description with no human-facing equivalent. They don’t read API documentation to figure out how to use a tool. They read an MCP server manifest. These are shadow artifacts in the same sense as llms.txt or a UCP merchant profile.
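The difference between build-time integration and runtime discovery is easier to see in code. Below is a minimal Python sketch, assuming an Agent Card loosely modeled on the A2A protocol’s JSON format (a name, a description, an endpoint `url`, and a list of `skills` with tags). The card contents and the `find_skills` helper are hypothetical; the real specification defines more fields than shown here.

```python
import json

# Hypothetical Agent Card, loosely modeled on A2A; field names are illustrative.
AGENT_CARD = json.loads("""
{
  "name": "Example Returns Agent",
  "description": "Handles return requests for Example Store orders.",
  "url": "https://agents.example.com/returns",
  "version": "1.0.0",
  "skills": [
    {"id": "start-return", "name": "Start a return", "tags": ["returns", "orders"]},
    {"id": "order-status", "name": "Check order status", "tags": ["orders", "shipping"]}
  ]
}
""")

def find_skills(card, tag):
    """Return the ids of skills the card advertises under the given tag."""
    return [s["id"] for s in card.get("skills", []) if tag in s.get("tags", [])]

print(find_skills(AGENT_CARD, "orders"))
print(find_skills(AGENT_CARD, "payments"))
```

No integration code for this counterpart was written ahead of time: the calling agent reads the card when the task demands it and decides on the spot whether this agent can help.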

We’re only scratching the surface of what it means for agents to interact autonomously, outside direct human control. It is both powerful and uncharted territory.

As agents discover products and engage with one another, the mechanisms for paying for goods and services break down. This is partly because the existing paradigm was built around human-initiated transactions, with all of the downstream implications for approvals, liability, and authentication. The existing systems also fall short because, as we move toward a model where microtransactions play a greater role (whether as a replacement for the advertising-based model of content monetization or in merchant payments), traditional credit card economics no longer work. We are seeing infrastructure emerge that addresses all of these points.
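The arithmetic behind “credit card economics no longer work” is simple enough to show directly. A minimal sketch, assuming a flat card fee of 2.9% plus $0.30 per transaction, a commonly quoted online rate used here purely for illustration; actual pricing varies by processor, card type, and volume.

```python
# Assumed card pricing for illustration: 2.9% + $0.30 per transaction.
PERCENT_FEE = 0.029
FIXED_FEE_USD = 0.30

def card_fee(amount_usd):
    """Processing fee for one card payment under the assumed pricing."""
    return amount_usd * PERCENT_FEE + FIXED_FEE_USD

for amount in (50.00, 1.00, 0.01):
    fee = card_fee(amount)
    print(f"${amount:>6.2f} payment -> fee ${fee:.4f} ({fee / amount:.1%} of the payment)")
```

Under these assumptions the fixed component dominates as payments shrink: a $50 purchase pays about 3.5% in fees, while a one-cent payment would cost about thirty times its own value to process.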

This is the first time in over two decades that we can see the potential for a platform shift in payments. The web’s economics have been shaped by an infrastructure constraint masquerading as an inevitability. Because there was no practical way to charge fractions of a cent for content or data, the web defaulted to advertising. That default shaped everything: how websites are designed (for engagement), how publishers are incentivized (for clicks), and the rise of surveillance-driven targeting (because ads need data). Now consider that as AI agents become significant consumers of web content, they represent an audience that literally cannot see ads. Direct monetization of content will increase rapidly. At the same time, as agents increasingly engage one another for services, another use case for micropayments will open up. x402 and stablecoin-based settlement make that viable at fractions of a cent per request, opening entirely new vectors of value exchange, from per-article content licensing to agent-to-agent payments for tasks.
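x402 revives the long-unused HTTP 402 Payment Required status code: the server answers an unpaid request with a price, and the client pays and retries. The Python sketch below simulates that loop in-process. It is not the x402 specification: the `server` function, the `Response` type, the $0.002 price, and the dict standing in for a signed stablecoin payment are all hypothetical, and real x402 defines its own headers, payload encoding, and settlement.

```python
from dataclasses import dataclass
from typing import Optional

PRICE_USD = 0.002  # assumed per-article price

@dataclass
class Response:
    status: int
    body: str
    quote_usd: Optional[float] = None

def server(path, payment_proof=None):
    """Hypothetical content server: quote a price via 402, serve once paid."""
    if payment_proof is None:
        return Response(402, "Payment Required", quote_usd=PRICE_USD)
    if payment_proof.get("amount_usd", 0) < PRICE_USD:
        return Response(402, "Insufficient payment", quote_usd=PRICE_USD)
    return Response(200, f"Full text of {path}")

def fetch_with_payment(path, budget_usd):
    """Request, read the quoted price, pay only if within budget, then retry."""
    first = server(path)
    if first.status != 402:
        return first
    if first.quote_usd > budget_usd:
        return first  # price exceeds the agent's budget: decline to pay
    proof = {"amount_usd": first.quote_usd}  # stand-in for a signed stablecoin payment
    return server(path, payment_proof=proof)

print(fetch_with_payment("/articles/42", budget_usd=0.01).status)
print(fetch_with_payment("/articles/42", budget_usd=0.001).status)
```

The design choice worth noticing is that pricing and payment live inside the request cycle itself, with no account signup, checkout page, or card network between the agent and the content.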

Trust and authentication are the least developed areas, but they are critical to get right if interactions are to scale on top of the layers we’ve just discussed. Every transaction, piece of content consumed, or purchase being marketed assumes that the machine counterpart is a good actor. As activity grows, we can expect more compromises of security and trust, as well as misinterpretations as we engage with entities that might not “assume” things the way humans do. We’re starting to see the foundations of this layer being set.

Emerging standards such as Web Bot Auth and Content Signals sit alongside the commerce-specific trust credentials discussed in the payments section, such as agentic tokens and Shared Payment Tokens (SPTs), which handle authorization for transactions. Together, they form the beginnings of an identity stack: cryptographic proof of who an agent is (Web Bot Auth), what it’s allowed to do with content (Content Signals), and what it’s authorized to spend (agentic tokens and SPTs).
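How the three questions in that identity stack compose can be sketched as a single authorization check. This is a hypothetical Python illustration, not any vendor’s implementation: Web Bot Auth identity (built on HTTP Message Signatures) is stubbed as a boolean, content permissions are parsed from a line written in the style of Cloudflare’s Content Signals Policy (`Content-Signal: search=yes, ai-train=no`), and the spend limit stands in for what a payment token would authorize. `AgentRequest`, `authorize`, and `parse_content_signal` are illustrative names.

```python
from dataclasses import dataclass

def parse_content_signal(line):
    """Parse 'Content-Signal: search=yes, ai-train=no' into {name: bool}."""
    _, _, value = line.partition(":")
    signals = {}
    for pair in value.split(","):
        key, sep, flag = pair.strip().partition("=")
        if sep:
            signals[key.strip()] = flag.strip().lower() == "yes"
    return signals

@dataclass
class AgentRequest:
    signature_verified: bool  # stub: outcome of verifying the request signature
    use: str                  # intended content use, e.g. "search" or "ai-train"
    amount_usd: float         # amount the agent wants to spend

def authorize(req, content_signal_line, spend_limit_usd):
    # 1. Who is the agent? (identity)
    if not req.signature_verified:
        return "reject: unverified agent"
    # 2. What may it do with the content? A missing signal is treated as
    #    "not permitted" here, a conservative choice made for this sketch.
    signals = parse_content_signal(content_signal_line)
    if not signals.get(req.use, False):
        return f"reject: '{req.use}' not permitted"
    # 3. What is it authorized to spend?
    if req.amount_usd > spend_limit_usd:
        return "reject: over spend limit"
    return "allow"

policy = "Content-Signal: search=yes, ai-input=yes, ai-train=no"
print(authorize(AgentRequest(True, "ai-input", 0.01), policy, spend_limit_usd=1.00))
print(authorize(AgentRequest(True, "ai-train", 0.01), policy, spend_limit_usd=1.00))
```

Each layer answers a different question, and a request must clear all three; that separation is what lets identity, content rights, and spending authority be issued by different parties.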

We should also note that this is very early. Fraud, authentication, and security have always been a game of whack-a-mole, and we expect any early success here to erode as fraudsters get smarter and as consumer adoption of agentic services increases. Expect growing emphasis on this area as activity in the machine economy picks up.

The speed at which this stack is being assembled is one of the most striking features of the entire trend. As the timestamps above illustrate, nearly every protocol and product discussed in this paper launched between late 2024 and early 2026. The entire stack, from discovery to communication to payments to identity, went from concept to production in roughly fourteen months.

The API layer took roughly a decade to mature. The mobile web took several years from the iPhone to responsive design and app stores. The third layer is being developed in a fraction of that time, in part because the builders are companies that ship production infrastructure as a core competency, and in part because the demand signal is unmistakable: nearly half of US shoppers already use AI tools for shopping, agentic traffic is surging, and the rise of vibe coding will only accelerate machine-to-machine interactions. Although usage today is in its infancy, laying this infrastructure is the first critical step toward adoption and scale.

This creates an advantage for first movers. Whether that is a startup building in the space, a retailer thinking about how to surface products, or a content creator trying to improve discoverability, the window where the architecture is still being defined is the window of maximum opportunity.

If the stack above is what’s being built, the question is: what does it change? Several implications stand out.

The economic terms of the web are being renegotiated.

The advertising model that has underwritten content for three decades is being challenged by agents. SaaS pricing built around human seats doesn’t hold when the user is a machine. How content is licensed, how and where discovery is monetized, and who captures margin when an agent intermediates a transaction are all open questions being answered right now.

Perhaps more profoundly, we’re entering a cycle in which agents generate artifacts to be consumed by other agents. This moves rapidly toward a model where humans are neither the intended audience nor the economic participants, and agents discover and transact among themselves. This is a radically different model from anything we’ve seen before.

Discovery resets to zero.

Every incumbent with a distribution advantage built on the human web (search rankings, app store placement, marketplace positioning, SEO-driven content strategies) faces a world where machines discover products and services through structured data, not keyword search or visual browsing. These advantages were built over decades and became self-reinforcing. Breaking in as a new entrant meant competing against compounding incumbency. The shift to machine-native discovery arrests that flywheel. When an agent evaluates structured profiles rather than search results, the accumulated weight of legacy rankings matters less, and the companies that publish the best machine-readable data can emerge as new winners regardless of where they sat in the old hierarchy. These moments of reshuffling are rare, and they don’t last long. New advantages around preferred ranking and discovery will compound just as quickly as the old ones did.

Brand and first-party data become the durable moats.

When agents can evaluate hundreds of options in seconds against structured attributes, product comparison becomes a commodity. The durable differentiation is what agents can’t easily evaluate: brand affinity, loyalty, trust. First-party customer data, such as purchase history, preferences, and loyalty membership, becomes the input that separates winners from the commodity race to the bottom.

New fraud categories will emerge, and existing infrastructure won’t catch them.

Agent impersonation, prompt injection at scale, page manipulation, and credential gaming are all new attack vectors that human-oriented fraud systems weren’t designed for. Until the trust layer matures, established brand and reputation serve as the primary proxy for trust.

The regulatory vacuum won’t last.

Regulators have so far largely refrained from new rules or enforcement, a tailwind that has allowed for massive growth and adoption. Regulation will come, and the companies shaping technical standards today will shape it. Startups should move fast while the window is open, and build to survive scrutiny when it closes.

The third layer is being built today. The shadow pages are live. The parallel storefronts are publishing. The payment rails are active. All of the scaffolding is in place for us to see a major shift in discovery, consumption and transactions.

The startups who build today, and the companies and creators who engage now, will define the next era of the internet. The standards are still being written, the economic terms are still fluid, and there is a reshuffling of the old guard that only takes place once every generation. The opportunity is there: act now or operate on the terms of those who are crafting that future today.

© 2026 Restive®, Inc.